Illustrative image: Colourbox.

Argumentation is everywhere, whether in politics, on the job market or in private life. ‘We exchange arguments in all kinds of situations,’ says Professor Annette Hautli-Janisz, assistant professor (Juniorprofessor) of Natural Language Processing at the University of Passau. To open the lecture series ‘Artificial intelligence – between hype and reality’, which is taking place in June and July at the University of Passau, she and Professor Henning Wachsmuth from the Institute for Artificial Intelligence at the University of Hanover discussed how good large language models (LLMs) are at arguing, and where their limits lie.

Both researchers work in natural language processing, a subfield of artificial intelligence concerned with how machines understand and generate language.

What LLMs can and cannot do – insights into ongoing research

How LLMs can be tamed: Professor Henning Wachsmuth and his team investigated whether large language models can defuse offensive statements without changing their content. To test this, they had both an AI model and humans revise comments; in a second step, human raters evaluated the revisions. The machine-generated versions were in fact rated as more successful than the human revisions. In a further step, the team tested how well the LLM could solve argumentation tasks of any kind. Here the AI model reached its limits: according to the researcher, the systems are designed not to support critical thinking but to follow instructions as well as possible.

LLMs for impersonating politicians: A team led by Professor Hautli-Janisz and Steffen Herbold, Professor of AI Engineering at the University of Passau, studied whether LLMs can convincingly impersonate politicians. The researchers used a dataset of written questions and answers from the British political talk show BBC Question Time. They also had an LLM generate artificial answers and presented both versions to British citizens for evaluation. The respondents were initially unaware that a machine was involved. One finding was that the participants consistently rated the imitated responses as more authentic, coherent and relevant than the politicians' own statements. Professor Hautli-Janisz concludes that ‘LLMs are so good that they can demonstrably mislead people’. The study has been published as a preprint and is currently undergoing peer review.

AI as a parole commissioner in the justice system: A team led by Professor Hautli-Janisz investigated how LLMs perform as parole commissioners. The motivation: in the US state of Pennsylvania, artificial intelligence is already being used to support court decisions. The researchers used a publicly available dataset of parole hearings from the US state of California. They anonymized the hearing transcripts and had the LLM make decisions based on them. The result: ‘In 60 percent of cases, the AI model replicated the parole commissioner’s decision.’ In 20 percent of cases, however, the generative model decided more strictly and denied parole. The researcher emphasized that the use of AI in the administration of justice raises major ethical questions. The study has not yet been published.

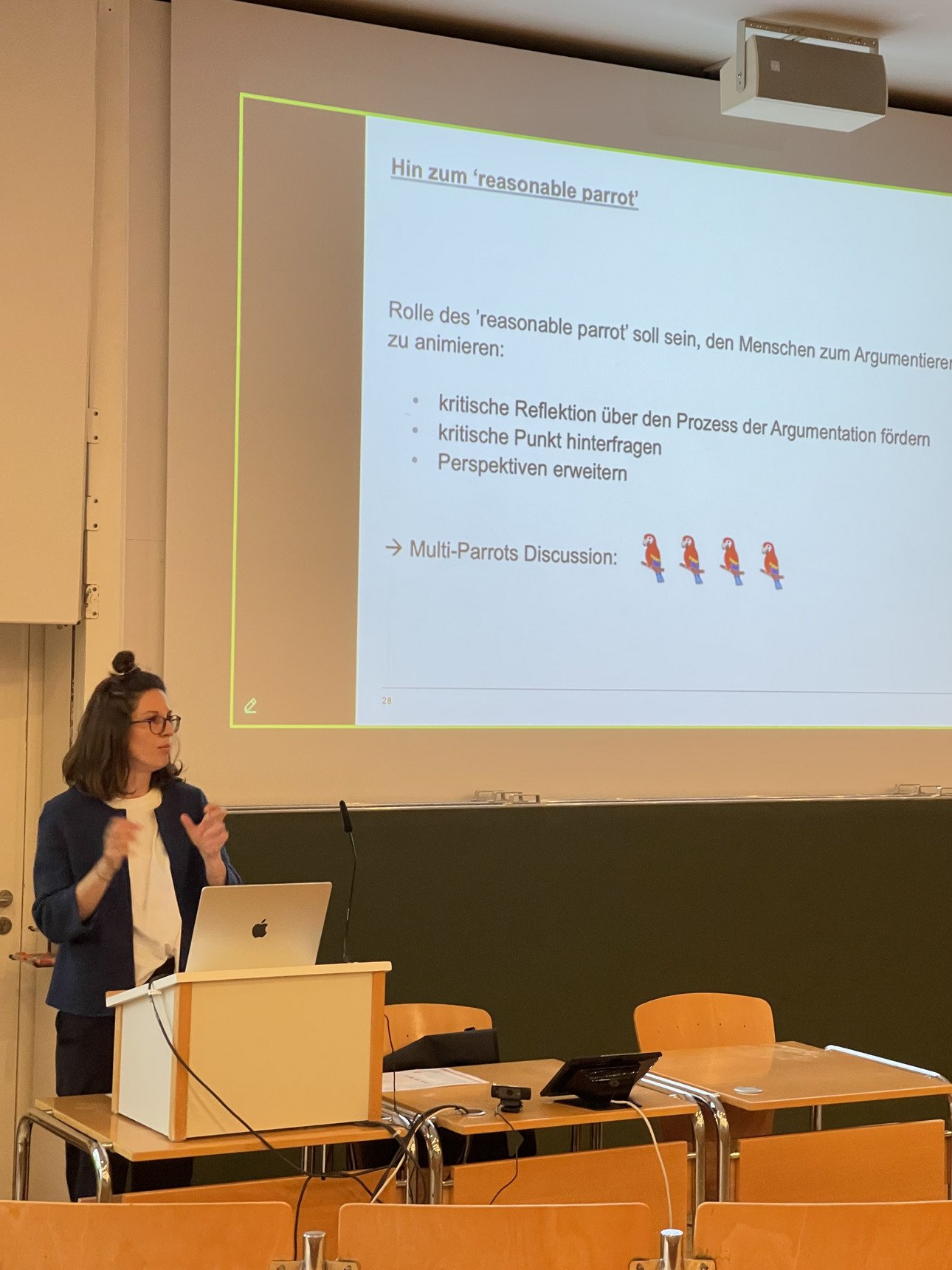

Idea – LLMs as intelligent parrots

LLMs can be rhetorically skillful, but they only follow instructions and do not encourage critical thinking. Can this be changed? An interdisciplinary research team that includes Professor Hautli-Janisz and her guest, Professor Wachsmuth, has developed an idea aimed particularly at how children and young people use the technology. The researchers condition the AI model to take on the roles of different philosophical parrots and engage users in critical discussion. The approach could open up educationally valuable ways of accessing the technology.

These topics are just a small excerpt from the field of computational linguistics. A glance at the figures shows just how large this field is: according to Professor Hautli-Janisz, 10,900 research papers with the keyword ChatGPT were published up to May 2025 alone, and 27,800 with the abbreviation LLM. Compared with the same period last year, this represents almost a doubling and a tripling, respectively.

Conclusion: Are LLMs capable of argumentation?

LLMs may seem convincing, but the technology rests on the ‘argumentum ad populum’, the appeal to popular opinion, explains Professor Hautli-Janisz. Anything that occurs often enough in the training data is treated as plausible by the model. This is a classic fallacy: a statement is justified by the fact that a majority believes it. ‘To master argumentation, you not only have to be able to generate it, but also to analyze it,’ says Professor Hautli-Janisz. Language models can do this for individual, specific tasks. However, it is still difficult to make general statements about their performance.

This text was machine-translated from German.